3rd Workshop on Visualization for AI Explainability

October 26, 2020, held virtually at IEEE VIS (originally planned for Salt Lake City, Utah)

The role of visualization in artificial intelligence (AI) has gained significant attention in recent years. As AI models grow more complex, the need to understand their inner workings has become critical. Visualization is a potentially powerful technique for meeting that need.

The goal of this workshop is to initiate a call for 'explainables' / 'explorables' that explain how AI techniques work using visualization. We believe the VIS community can leverage its expertise in creating visual narratives to bring new insight into the often-obfuscated complexity of AI systems.

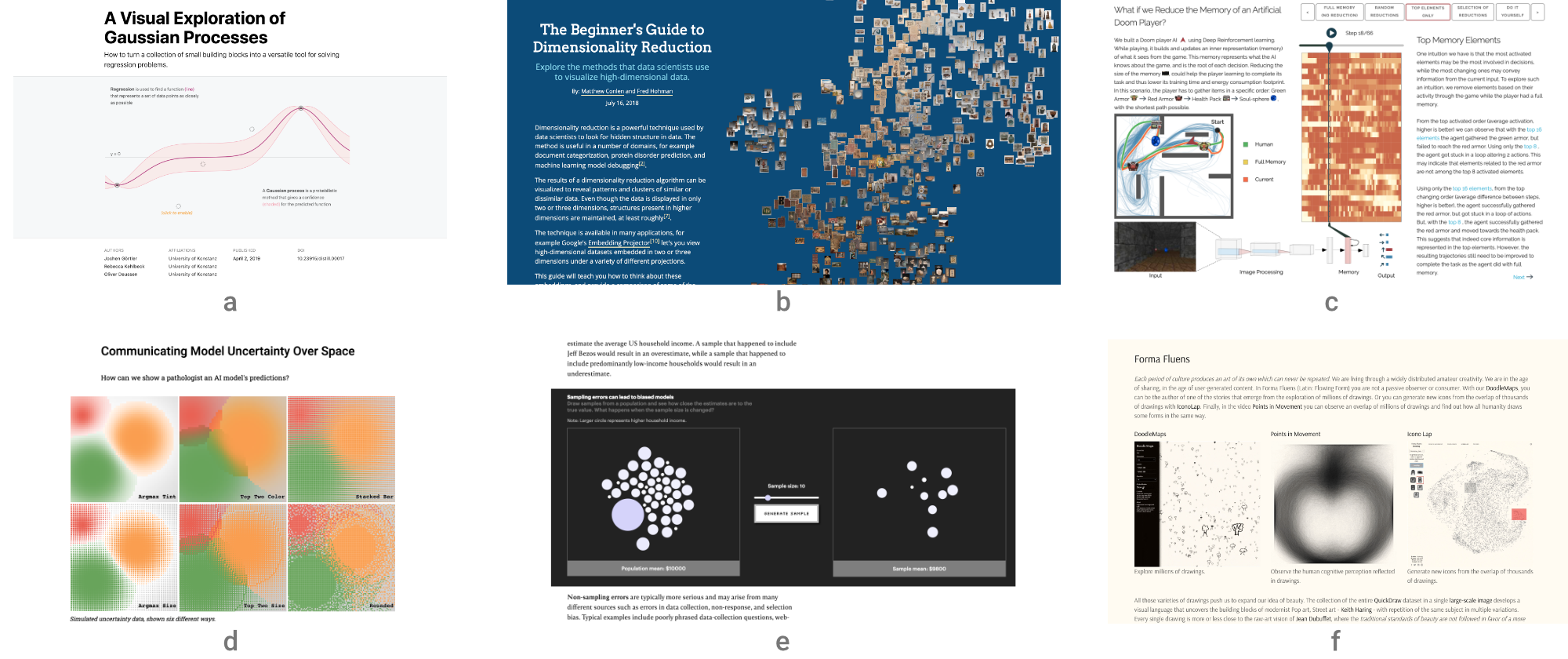

- (a) A Visual Exploration of Gaussian Processes by Görtler, Kehlbeck, and Deussen (VISxAI 2018)

- (b) The Beginner's Guide to Dimensionality Reduction by Conlen and Hohman (VISxAI 2018)

- (c) What if we Reduce the Memory of an Artificial Doom Player? by Jaunet, Vuillemot, and Wolf (VISxAI 2019)

- (d) Communicating Model Uncertainty Over Space by Pearce

- (e) The Myth of the Impartial Machine by Feng and Wu

- (f) FormaFluens Data Experiment by Strobelt, Phibbs, and Martino

Important Dates

Program Overview

All times in ET (UTC -5).

| Time | Event |
|---|---|
| 12:00 -- 12:05 | Welcome from the Organizers |
| 12:05 -- 1:00 | Keynote: Thomas Wolf (Huggingface Inc.), Facilitating Interactive Explanations with Open-source Libraries: An Introduction to Transfer Learning in NLP and HuggingFace |
| 1:00 -- 1:30 | Session I: Comparing DNNs with UMAP Tour (Mingwei Li and Carlos Scheidegger); How Does a Computer "See" Gender? (Stefan Wojcik, Emma Remy, and Chris Baronavski) |
| 1:30 -- 2:00 | Break |
| 2:00 -- 2:30 | Session II: Théo Guesser (Théo Jaunet, Romain Vuillemot, and Christian Wolf); Shared Interest: Human Annotations vs. AI Saliency (Angie Boggust, Benjamin Hoover, Arvind Satyanarayan, and Hendrik Strobelt); A Visual Exploration of Fair Evaluation for ML - Bridging the Gap Between Research and the Real World (Anjana Arunkumar, Swaroop Mishra, and Chris Bryan) |
| 2:30 -- 3:00 | Session III: Anomagram: An Interactive Visualization for Training and Evaluating Autoencoders on the task of Anomaly Detection (Victor Dibia); What Does BERT Dream Of? (Alex Bäuerle and James Wexler); Active Learning: A Visual Tour (Zeel B Patel and Nipun Batra) |
| 3:00 -- 3:05 | Closing Session |
| 3:05 -- 4:00 | VISxAI Eastcoast party |

Hall of Fame

Each year we award Best Submissions and Honorable Mentions. Congrats to our winners!