9th Workshop on

Visualization for AI Explainability

October 2026 at IEEE VIS in Boston, MA

The role of visualization in artificial intelligence (AI) has gained significant attention in recent years. As AI models grow more complex, the need to understand their inner workings has become critical. Visualization is a potentially powerful technique for meeting that need.

The goal of this workshop is to initiate a call for 'explainables' / 'explorables' that use visualization to explain how AI techniques work. We believe the VIS community can leverage its expertise in creating visual narratives to bring new insight into the often obfuscated complexity of AI systems.

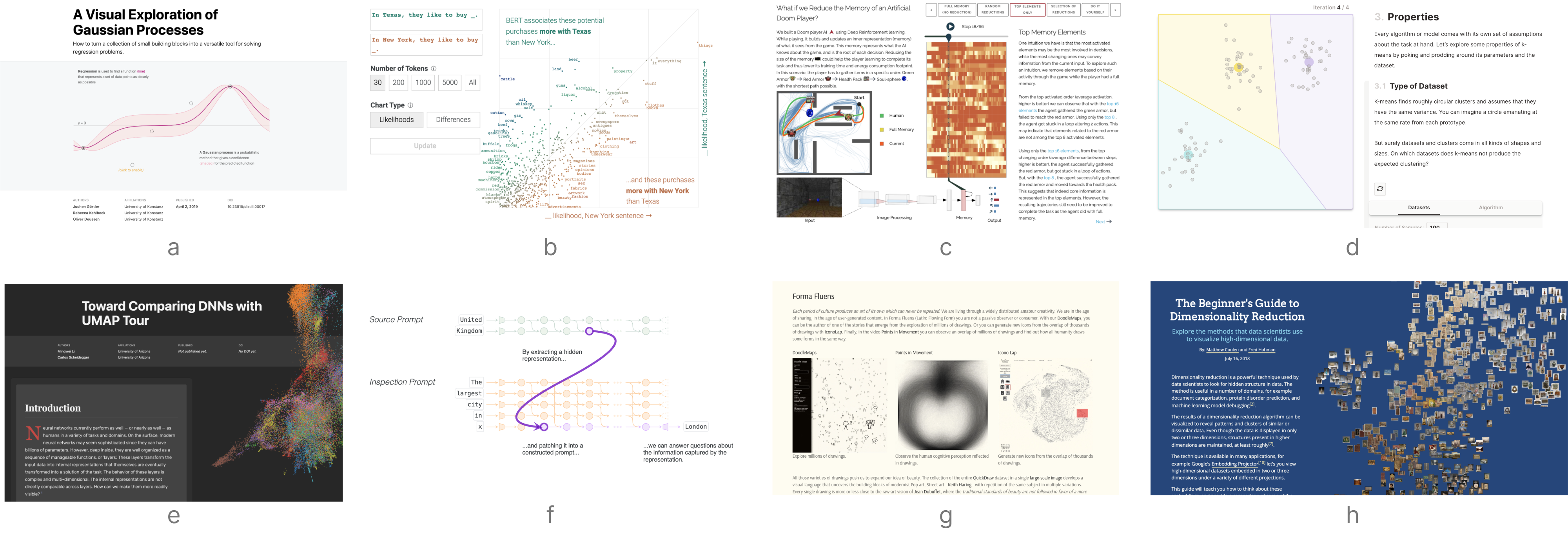

- (a) A Visual Exploration of Gaussian Processes by Görtler, Kehlbeck, and Deussen (VISxAI 2018)

- (b) What Have Language Models Learned? by Adam Pearce (VISxAI 2021)

- (c) Transformer Explainer: LLM Transformer Model Visually Explained by Cho, Kim, Karpekov, Helbling, Wang, Lee, Hoover, Chau (VISxAI 2025)

- (d) K-Means Clustering: An Explorable Explainer by Yi Zhe Ang (VISxAI 2022)

- (e) Can Large Language Models Explain Their Internal Mechanisms? by Hussein, Ghandeharioun, Mullins, Reif, Wilson, Thain, Dixon (VISxAI 2024)

- (f) FormaFluens Data Experiment by Strobelt, Phibbs, and Martino

- (g) The Beginner's Guide to Dimensionality Reduction by Conlen and Hohman (VISxAI 2018)

Important Dates

Program Overview

All times are in ET (EDT in October, UTC-4).

Call for Participation

To make our work more accessible to a general audience, we are soliciting submissions in a novel format: blog-style posts and Jupyter-like notebooks. In addition, we accept position papers in a more traditional form.

Hall of Fame

Each year we award Best Submissions and Honorable Mentions. Congrats to our winners!